The LLM Wiki pattern changed how I think about notes, documents, and AI. I’ve been running four different productivity frameworks in parallel for years, and they’ve been politely ignoring each other like strangers at a dinner party. PARA held my folders. Zettelkasten held my ideas. GTD held my tasks. Readwise held my highlights. And I held my breath, hoping it would all connect someday.

It finally did. But not the way I expected.

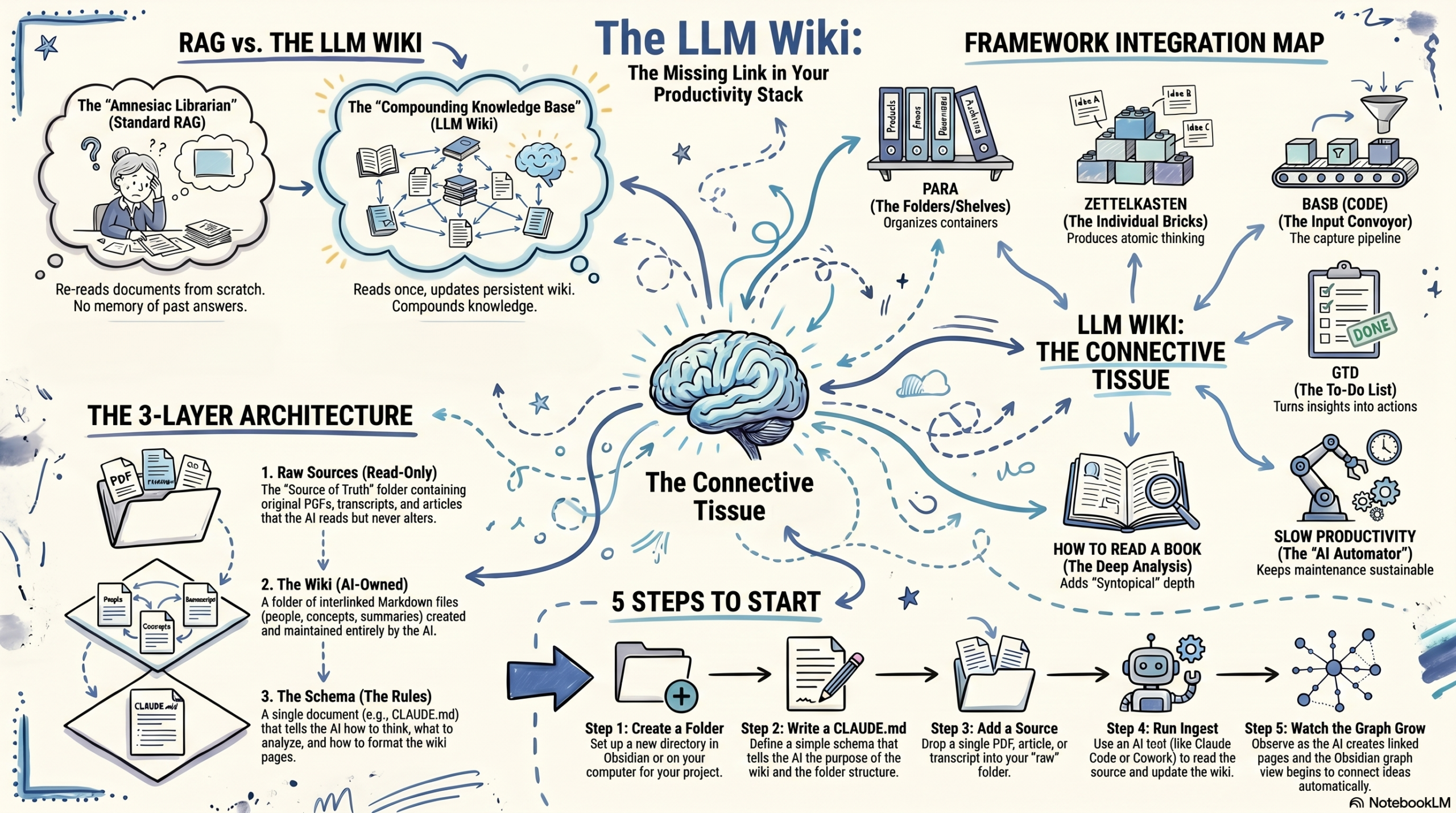

Andrej Karpathy (co-founder of OpenAI, former AI director at Tesla, occasional poster of ideas that rearrange your brain) published a pattern he calls the LLM Wiki. The short version: instead of making AI re-read your documents every time you ask a question, you have the AI build and maintain a persistent wiki from those documents. The knowledge compounds. The connections stay. Nothing starts from scratch.

The long version is this blog post. Grab your favorite mug: coffee, tea, or something more mysterious. I’m not here to judge (well, maybe just enough to keep things interesting).

🤔 The problem the LLM Wiki solves

Here’s what happens when you upload files to ChatGPT, NotebookLM, or any RAG (retrieval-augmented generation) tool: you ask a question, it searches your files, pulls out some relevant chunks, stitches them together, and gives you an answer.

That works. Once.

Ask a similar question tomorrow, and the AI does all of that work again from scratch. It retains no memory of yesterday’s answer. There’s no accumulated understanding. In effect, every question is a first date with your documents.

If you’ve ever tried to connect ideas from five different PDFs, three podcast episodes, and that one article you bookmarked at 11pm, you know this is exactly the kind of thing AI should be good at. But with RAG, the system has to find and reassemble those connections every single time. Think of it as a librarian with amnesia who’s great at finding books but can’t remember that you were here yesterday asking about the same topic.

🛠️ Karpathy’s LLM Wiki fix (and why it clicked for me)

Karpathy’s idea is simple enough to explain in one paragraph and, more importantly, powerful enough to restructure how I work.

Instead of searching raw documents every time, you have the AI read your documents once and build a structured wiki from them. When you add a new source (a PDF, a blog post, a transcript, a podcast summary), the AI doesn’t just file it away. Rather, it reads the source, extracts key ideas, updates existing wiki pages, creates new ones for new concepts, links related ideas together, and flags contradictions.

As a result, the wiki keeps growing. The synthesis is already done before you ask your next question. When you do ask, the AI pulls from compiled, cross-referenced knowledge instead of a pile of unprocessed files.

His framing stuck with me: Obsidian is the IDE. The LLM is the programmer. The wiki is the codebase. You don’t write the wiki. The AI writes the wiki. You decide what to feed it and what to ask it.

(If you’ve never used Obsidian, that’s fine. It’s a free note-taking app that works with plain markdown files. Think of it as a really smart folder of text files with a pretty graph view. You’ll see.)

📚 The LLM Wiki has three layers (and yes, that’s it)

The whole architecture is just three folders:

Raw sources: your original documents. PDFs, articles, transcripts, clipped web pages, whatever you’re working with. These are sacred. Read-only. The AI reads them but never touches them. Consider this your source of truth.

The wiki: a folder of markdown files that the AI creates and maintains. Entity pages for people and organizations. Concept pages for ideas and frameworks. Source summaries. Comparative analyses. Timelines. An index. All interlinked with [[wikilinks]]. The AI owns this folder entirely. You read it. The AI writes it.

The schema: a single document that tells the AI how to operate. What’s the wiki about? What does the folder structure look like? How should pages be formatted? What should happen when a new source arrives? If you’re using Claude Code, this file is called CLAUDE.md and it gets read automatically when you open the project.

In short, that’s all it takes: three folders and one rules document. If you know how to click ‘New Folder,’ you’re already overqualified.
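If you’d rather script the setup than click, here’s a minimal Python sketch of that three-layer layout. The function name `scaffold_wiki` and the starter schema text are my own placeholders, not anything from Karpathy’s pattern, and the `wiki/` subfolder names follow the layout I use later in this post. Treat it as one way to do it, not the way.

```python
from pathlib import Path

# Subfolders under wiki/, one per page type described above (assumed names).
WIKI_SUBFOLDERS = ["entities", "concepts", "sources", "analysis", "timelines"]

# Hypothetical starter schema; replace it with your own rules as they evolve.
STARTER_SCHEMA = """# CLAUDE.md

## Purpose
This wiki is about {topic}.

## Rules
- raw/ is read-only. Never modify source documents.
- wiki/ is yours to create and maintain.
"""


def scaffold_wiki(parent: Path, topic: str) -> Path:
    """Create raw/, wiki/ (with subfolders), and CLAUDE.md; return the wiki root."""
    root = parent / f"llm-wiki-{topic}"
    (root / "raw").mkdir(parents=True, exist_ok=True)
    for name in WIKI_SUBFOLDERS:
        (root / "wiki" / name).mkdir(parents=True, exist_ok=True)
    schema = root / "CLAUDE.md"
    if not schema.exists():  # never overwrite a schema you have already refined
        schema.write_text(STARTER_SCHEMA.format(topic=topic), encoding="utf-8")
    return root
```

Calling `scaffold_wiki(Path.home() / "vault", "open-source-governance")` would create the folders under a hypothetical `vault` directory. Running it twice is safe: existing folders and an existing CLAUDE.md are left alone.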

❓ “But Courtney, why do you have ELEVEN of them?”

Fair question. Admittedly, I may have gotten a little carried away. (My ADHD brain heard “persistent compounding knowledge” and responded with “YES, FOR EVERYTHING.”)

But actually, there’s a real reason. I work across wildly different domains: open source policy, theology, developer relations, cross-CMS infrastructure, neurodiversity research for my family, personal wellness, and more. Each domain has its own vocabulary, its own source types, and its own rules for what counts as a good analysis.

For example, a theology source needs to identify which tradition it comes from. Meanwhile, a competitive intelligence source needs a signal confidence rating. And a neurodiversity source needs to note which family member it applies to. Clearly, one wiki can’t hold all of those rules without turning into a mess.

Because of this, I built one wiki per domain, each with its own tailored schema. They all live in my Obsidian vault under 1. Projects/. They all follow the same three-layer architecture. But each one thinks differently about its sources.

You absolutely do not need eleven. Start with one: just one topic where your knowledge is playing hide-and-seek across too many places. The rest can wait, or simply never happen. Trust me: one LLM Wiki that grows is infinitely better than eleven imaginary ones.

🗂️ My 11 LLM Wiki topics (because you’re going to ask)

- WordPress & Open Source Governance: contributor programs, foundation relationships, legal conflicts, policy decisions

- Theology, Deconstruction & Faith Formation: my journey from charismatic traditions toward progressive Christianity

- FAIR Project & Cross-CMS Infrastructure: the Linux Foundation project connecting WordPress, Drupal, Joomla, and TYPO3

- DevRel & Competitive Analysis: developer relations programs across the hosting and CMS industry

- Open Source Sustainability & Economics: funding models, maintainer health, OSPO structures, contributor economics

- Neurodiversity & Parenting: research and strategies for our neurodivergent family, connecting therapy approaches to nervous system science

- Product Intelligence: product portfolio tracking and developer experience mapping

- Personal Brand & Public Speaking: conference talks, blog strategy, content pillars, voice development

- WordPress Training & Curriculum: learning pathways, instructional design, contributor onboarding

- Wellness, ADHD Management & Nutrition: fitness, nutrition science, and ADHD strategies that survive low-executive-function days (if you know, you know)

- PKM & Productivity Tooling: the meta-wiki that tracks how all the other wikis work (yes, I built a wiki about my wikis, no I’m not sorry)

🔍 What makes each LLM Wiki actually different

This is the part that caught me most off guard. The schema (that rules document) makes an enormous difference. It’s not just “please make wiki pages.” It’s specific instructions for how the AI should think about each domain.

My theology wiki requires every claim to name its theological tradition (progressive, evangelical, mainline, academic). It also flags manipulation patterns when analyzing materials from high-control groups. Beyond that, it maintains a running evaluation of worship music for my church’s music team, tracking which songs pass theological review, which don’t, and why.

Similarly, the neurodiversity wiki tags every page with which family member it applies to. Every concept page ends with a “What this means for us” section, because a research paper is only useful if it turns into something actionable at 7am on a Tuesday.

On the other hand, the DevRel wiki rates competitive intelligence by confidence level (strong, moderate, weak) and generates experiment briefs. It automatically flags anything older than 90 days as potentially stale, because this industry moves fast.

Then there’s the wellness wiki, which knows what fitness equipment I own, rates every strategy for ADHD sustainability (will I actually keep doing this on a bad brain day?), and classifies nutrition content by Trim Healthy Mama (THM) fuel type. Because a wiki that doesn’t know your constraints can’t give you useful advice.

Ultimately, the schema is where you teach the AI how you think about this domain. The better your schema, the smarter the wiki. And importantly, the schema evolves as you refine it over time.
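To make one of those rules concrete: the DevRel wiki’s 90-day staleness flag boils down to a date comparison. In my setup the rule lives in the schema as a plain-language instruction and the AI applies it, so the Python below is only a sketch of the same logic. The names `is_stale` and `stale_pages` are hypothetical, and I’m assuming each page records a last-updated date somewhere you can read it.

```python
from datetime import date, timedelta

# Threshold taken from the DevRel wiki's rule: older than 90 days is suspect.
STALE_AFTER = timedelta(days=90)


def is_stale(last_updated: date, today: date) -> bool:
    """True when a page has gone more than 90 days without an update."""
    return today - last_updated > STALE_AFTER


def stale_pages(pages: dict[str, date], today: date) -> list[str]:
    """Return the names of stale pages, sorted for a stable report."""
    return sorted(name for name, updated in pages.items() if is_stale(updated, today))
```

A page updated exactly 90 days ago is not flagged; day 91 is. Pick whichever boundary matches how fast your own domain moves.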

🤝 The LLM Wiki: where all my productivity frameworks finally talk to each other

Here’s what nobody’s written about yet. The LLM Wiki isn’t just an AI trick. It’s the missing layer that connects PARA, Zettelkasten, GTD, and Building a Second Brain into a single working system.

I’ve been running all of these for years. They were never fully integrated. They all had their own territory, like neighbors who wave but never come over for dinner. The LLM Wiki is the dinner party.

📦 PARA gives you the containers

Tiago Forte’s PARA method (Projects, Areas, Resources, Archives) answers one question: where does this go? It’s a filing system. A really good filing system. But filing isn’t synthesizing.

Specifically, each wiki lives under Projects. It reads from Areas and Resources but never writes to them. PARA holds the structure. The LLM Wiki does what PARA never could: connect ideas across folders.

🧠 Zettelkasten gives you the thinking

Niklas Luhmann’s Zettelkasten, beautifully explained in Sönke Ahrens’ How to Take Smart Notes, gave me the habit of writing atomic notes. Essentially, it’s one idea per note with dense links between them. Fleeting notes capture raw thoughts. Literature notes process what I read. Permanent notes hold durable insights.

Currently, my vault has 400+ atomic notes. They’re well-structured and they link to each other. Yet for years they sat there, quietly being correct but not actively working for me. Now the LLM Wiki reads my entire Zettelkasten layer as source material during its daily scan, identifying which atomic notes are relevant to which wiki and weaving their insights into the compiled knowledge.

Ahrens wrote that the payoff of note-taking should be exponential as connections accumulate. In theory, he was right. But maintaining those connections by hand? That’s the part where every Zettelkasten practitioner eventually stalls. The AI, on the other hand, does not stall.

✅ GTD gives you the actions

David Allen’s Getting Things Done answers a different question: what do I do next? I run GTD through Things 3. Projects, next actions, weekly reviews, the whole deal.

GTD doesn’t manage knowledge. Instead, it manages commitments. But knowledge work generates commitments constantly. When a wiki lint check flags contradictions between two pages, that’s a next action. If a daily scan surfaces a source that needs deeper reading, that becomes a project. And once an analysis page reveals a research gap, that’s a someday/maybe.

Allen said your mind is for having ideas, not holding them. To take that further: your mind is for asking good questions, not maintaining cross-references.

👩💻 Building a Second Brain gives you the pipeline

Forte’s Building a Second Brain framework (CODE: Capture, Organize, Distill, Express) gave me the pipeline from raw input to finished output. Specifically, Readwise captures my highlights. PARA organizes them. Then progressive summarization distills them.

The LLM Wiki turbocharged the Distill stage. Previously, progressive summarization was manual: bold the key passages, highlight the bolds, and write a summary. I’d do it for maybe 10% of what I read. Meanwhile, the other 90% sat unprocessed, theoretically available but practically invisible.

Now every source gets distilled automatically. The AI reads the full text, extracts key claims, creates a summary, and links it to entity and concept pages. That’s progressive summarization at machine speed, across everything, not just the sources I had time for.

🐢 Slow Productivity provides the permission

Cal Newport’s Slow Productivity says: do fewer things, work at a natural pace, obsess over quality.

To be clear, I do not maintain 11 wikis. The AI maintains 11 wikis. I maintain zero. I read things I would read anyway and highlight what interests me. The wikis feed themselves from my normal workflow. In essence, Slow Productivity means the system should not demand extra effort from you. This one does not.

📖 How to Read a Book provides the depth

Here’s one for the deep readers. Mortimer Adler’s How to Read a Book describes four levels of reading. The highest level, syntopical reading, means reading multiple sources on the same topic and constructing an analysis that none of them individually contain.

That’s exactly what the LLM Wiki does on every ingest. Five articles about open source governance enter the wiki. The AI doesn’t just summarize each one. Rather, it identifies agreements, contradictions, gaps, and questions none of the sources asked. Syntopical reading used to be something only researchers with months of time could do. Now it happens overnight.

🧩 How the LLM Wiki connects them all

| Framework | What it does | Where it lives |
|---|---|---|
| PARA | Organizes everything into containers | Obsidian vault folders |
| Zettelkasten | Produces atomic, linked thinking | 3. Resources/ subfolders |
| BASB | Captures and progressively distills | Readwise → Snipd → Obsidian |
| GTD | Turns insights into actions | Things 3, fed by wiki lint |
| LLM Wiki | Synthesizes across all of the above | 1. Projects/llm-wiki-*/ |
| Slow Productivity | Keeps the whole thing sustainable | The AI does the maintenance |
| How to Read a Book | Adds syntopical depth to every source | Wiki analysis pages |

The LLM Wiki doesn’t replace any of these frameworks. It’s the connective tissue that makes them a system instead of a collection. PARA organizes. Zettelkasten thinks. BASB captures. GTD acts. The wiki synthesizes. And Slow Productivity keeps you sane.

🔑 The vault-aware trick that made the LLM Wiki actually work

Karpathy’s pattern assumes you manually drop files into a raw/ folder. That’s fine for a fresh start. But I’ve been taking notes in Obsidian for years. My vault already had Readwise syncing article highlights, Snipd syncing podcast clips, hundreds of Zettelkasten notes, sermon notes, Bible study notes, and a full PARA structure.

So each wiki’s schema tells the AI where to look beyond the raw folder. Essentially, every LLM Wiki can read (never write) from my existing vault:

- 2. Areas/ for ongoing responsibilities and interests

- 3. Resources/ for Readwise highlights, Snipd clips, atomic notes, literature notes, and fleeting notes

- 5. Inbox/ for unprocessed captures

Consequently, my regular reading workflow (highlight in Readwise, clip in Snipd) feeds the wikis automatically. No extra steps. No special exports. The notes I was already writing became raw material for something bigger.
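If you want to see what a vault-aware scan amounts to, here’s a rough Python sketch that lists notes changed recently in those three read-only folders. The folder names come from my vault; the function name `recently_modified`, the 24-hour default window, and the choice to look only at `.md` files are assumptions for illustration. The AI does this step for me, so this is a stand-in for the idea, not my actual tooling.

```python
import time
from pathlib import Path
from typing import Optional

# Folders a wiki may read from but never write to (names from my vault).
READ_ONLY_SOURCES = ["2. Areas", "3. Resources", "5. Inbox"]


def recently_modified(
    vault: Path, hours: float = 24, now: Optional[float] = None
) -> list[Path]:
    """Return markdown notes modified within the last `hours`, sorted by path."""
    cutoff = (time.time() if now is None else now) - hours * 3600
    hits = []
    for folder in READ_ONLY_SOURCES:
        base = vault / folder
        if not base.is_dir():  # skip folders this vault does not have
            continue
        for note in base.rglob("*.md"):
            if note.stat().st_mtime >= cutoff:
                hits.append(note)
    return sorted(hits)
```

The function only reads file metadata, which keeps the “read, never write” promise intact. Deciding which of those notes belong to which wiki is still a judgment call for the AI.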

If you already have an Obsidian vault with any amount of content, you’re not starting from zero. Your vault is the raw material. Your LLM Wiki compiles it. If you’re curious what this looks like in practice, I publish a large portion of my vault publicly at publish.obsidian.md/courtneyr-dev, including Readwise article highlights, book notes, and sermon notes. You can browse it to see what raw sources look like before the LLM Wiki processes them.

📱 Beeper: the part that made my friends say “wait, what?”

This is where it gets a little wild. Stay with me.

I use Beeper to aggregate dozens of Slack workspaces and Discord servers into one app. Open source communities, WordPress channels, DevRel groups, and project-specific servers all live in one unified inbox.

Seven of my eleven wikis run a daily Beeper scan. In practice, the AI reads all group chat messages from the last 24 hours across every connected workspace. Then it uses judgment (not keyword matching) to identify what’s relevant to each wiki’s domain.

A conversation about a foundation policy change? The governance wiki picks it up. Someone debating maintainer compensation models? The sustainability wiki files it. A thread about a new translation platform? The FAIR wiki captures it.

After all, Slack conversations are the most ephemeral form of institutional knowledge. They scroll past, they disappear into history, and three months later, nobody can find that one thread where the important decision was made. The LLM Wiki makes them durable.

(If you don’t use Beeper or Slack, feel free to skip this part entirely. The wikis work great with just vault sources. The Beeper layer is simply a bonus for people drowning in chat channels.)

🕒 Daily automated maintenance

Each wiki runs as a Claude Cowork project with a scheduled task. As a result, every morning each wiki:

- Scans the vault for new or modified content

- Scans Beeper for relevant conversations (7 of 11 wikis)

- Ingests everything relevant by creating and updating wiki pages

- Lints the wiki by finding orphan pages, dead links, contradictions, and stale content

- Writes a summary of what changed

I wake up to wikis that grew overnight. My reading, my conversations, and my research are all synthesized while I slept.

Of course, if you’re not ready for automated scheduling, that’s completely fine. You can do everything manually: drop a source in raw/, tell the AI to ingest it, and watch the pages appear. Scheduling is the luxury version. Manual is where everyone starts.
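Two of the lint checks from the list above, dead links and orphan pages, are mechanical enough to sketch in Python. This is a simplified illustration, not what Claude actually runs: the function name `lint_links` is hypothetical, and I’m assuming links use bare page names like [[Page]] or [[Page|alias]], that file names are unique across the wiki, and that index.md is exempt from the orphan check. Embedded files (the ![[...]] form) would show up as dead links here.

```python
import re
from pathlib import Path

# Captures the target of [[Target]], [[Target|alias]], or [[Target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def lint_links(wiki_dir: Path) -> tuple[dict[str, list[str]], list[str]]:
    """Return (dead links per page, orphan pages) for a folder of markdown files."""
    pages = {p.stem: p for p in wiki_dir.rglob("*.md")}
    linked: set[str] = set()
    dead: dict[str, list[str]] = {}
    for name, path in pages.items():
        text = path.read_text(encoding="utf-8")
        targets = {t.strip() for t in WIKILINK.findall(text)}
        linked |= targets - {name}  # a page linking to itself is not an inbound link
        missing = sorted(t for t in targets if t not in pages)
        if missing:
            dead[name] = missing
    # Orphans: pages nothing else links to (index.md is the entry point, so skip it).
    orphans = sorted(n for n in pages if n not in linked and n != "index")
    return dead, orphans
```

Contradictions and stale content are different: spotting them takes reading comprehension, which is exactly why I hand the lint pass to the AI instead of a script.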

🚀 How to build your first LLM Wiki (the un-overwhelming version)

You don’t need 11 wikis. Beeper integration and scheduled tasks are completely optional. And you certainly don’t need to have read every productivity book I just referenced.

You need one folder and one source document to start your first LLM Wiki. Here’s the setup:

Step 1: Create a folder in your Obsidian vault (or any folder on your computer, since the wiki is just markdown files):

    llm-wiki-[your-topic]/
    ├── raw/
    ├── wiki/
    │   ├── entities/
    │   ├── concepts/
    │   ├── sources/
    │   ├── analysis/
    │   └── timelines/
    └── CLAUDE.md

Step 2: Write your CLAUDE.md. Start simple. Here’s a minimal version:

    # CLAUDE.md

    ## Purpose

    This wiki is about [your topic].
    Build structured, interlinked markdown pages from the sources in raw/.

    ## Rules

    - raw/ is read-only. Never modify source documents.
    - wiki/ is yours to create and maintain.
    - Every page starts with a 2-3 sentence summary.
    - Link related pages with [[wikilinks]].
    - Flag contradictions between sources.
    - Update wiki/index.md on every ingest.

Believe it or not, that’s a perfectly good starting schema. Over time, you can add entity lists, analysis frameworks, formatting rules, and vault-aware source paths as you learn what your wiki needs. The schema evolves. That’s part of the process.

Step 3: Drop one source document into raw/. An article, a PDF, a transcript. Whatever you’ve got.

Step 4: Open Claude Code in a terminal and tell it to ingest:

    cd path/to/llm-wiki-your-topic && claude

Then say: “I added a new source to raw/. Please read it and update the wiki.”

Alternatively, use Claude Cowork to create a project, point it at the folder, paste your CLAUDE.md into custom instructions, and just start talking. No terminal needed.

Step 5: Watch the wiki pages appear in Obsidian. Check the graph view. See the connections forming.

That’s it. One source becomes a handful of pages. Two sources turn into a small crowd of cross-references. By the fifth, your graph view starts to look uncannily like it’s sprouting new branches overnight. And by the tenth, you’ll know exactly why I couldn’t stop at one.

💡 The part where the LLM Wiki changes how you think

I want to be honest about something. The first wiki I built felt like a novelty. Cool graph view. Nice pages. Fun demo.

For me, the shift happened around the third ingest, when I asked a question that spanned multiple sources. The AI answered it instantly, citing wiki pages that had already done the synthesis. I didn’t wait for it to search. I didn’t hope it found the right chunks. The answer was already compiled.

That’s the word Karpathy uses, and it’s the right one. The LLM Wiki is compiled knowledge. RAG, by contrast, is interpreted on the fly. The difference feels like looking something up in an encyclopedia versus asking someone to read five books and summarize them while you wait.

Your questions compound. Over time, your reading compounds too. Even your conversations compound. And the tedious part (the cross-referencing, the consistency-checking, the “wait, didn’t that other article say something different?”) happens automatically.

Karpathy compared this to Vannevar Bush’s 1945 vision of the Memex: a personal knowledge store with associative trails between documents. Bush imagined it. Luhmann approximated it with index cards. Forte systematized it with folders. Ahrens refined it with atomic notes. Allen freed up the mental space for it. I’ve even explored what that associative, relationship-driven web looks like in my own WordPress plugin work.

Nevertheless, none of them solved the maintenance problem at scale. The AI does.

And you really can start with one folder, one source, and ten minutes.
